
Search engine optimization, or SEO, is a set of practices that improve a website so that it ranks higher in relevant searches.

SEO is everything you do to increase the likelihood that your website will appear when someone searches for a keyword connected to it.

SEO has several parts, including on-page optimization, off-page optimization, and technical SEO.

You may have a solid strategy for on-page and off-page SEO, but you cannot skip the technical part. It is the most neglected part of SEO, yet it is a critical one.

Here you will learn what to optimize in technical SEO for hotels, and how:

Technical SEO makes your hotel's website easier for search engines such as Google, Bing, and DuckDuckGo to discover and crawl, and faster to load.

These small adjustments to your website can significantly increase online traffic and improve your search engine rankings.

The process of optimizing a hotel's website for SEO can indeed be complicated. Still, it can be made simpler by focusing on a few essential "high-value" components, which we will discuss below.

Remember that this article is concerned with the technical elements of your hotel website rather than with activities like keyword research, link building, and creating relevant content.

Hotels that focus on SEO are far more likely to be discovered online by potential guests and partners.

People searching for your hotel know what they are looking for and want to see what your location offers, so your website needs valuable content.

With the right tool, you can quickly audit your hotel website's technical SEO. The tool will report what your website lacks and what needs your attention.

Let’s have a look at the checklist:

Any SEO specialist will tell you that a sitemap on your website is essential.

A sitemap lists the most relevant pages on a website, making sure that search engines can find and crawl them. Sitemaps also help search engines understand the structure of your website, and help visitors navigate it.

There are two types of sitemap: HTML and XML. HTML sitemaps are for the people visiting the website, while XML sitemaps are for search engines and other bots.

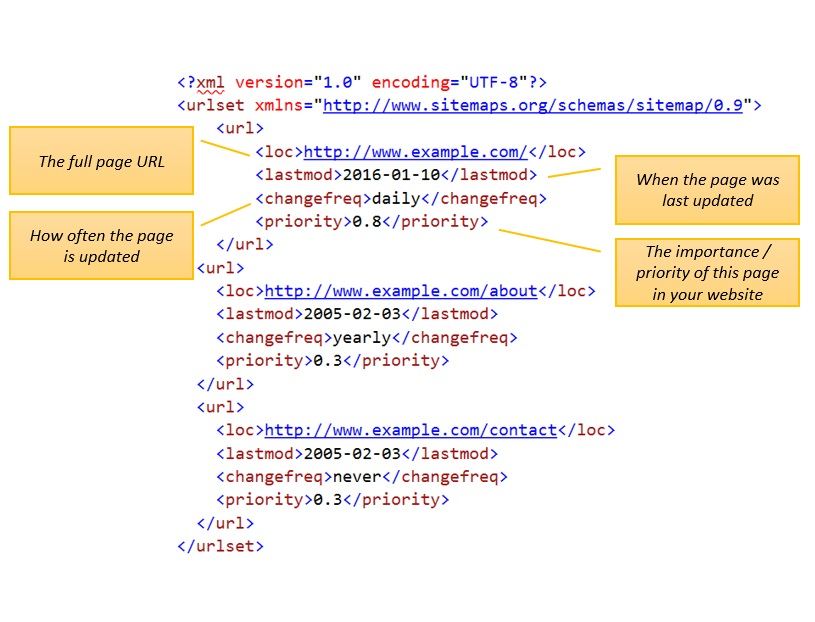

A. XML Sitemap

Source: XML sitemap generator

A single XML sitemap can contain at most 50,000 URLs and must not exceed 50 MB uncompressed.

When you reach either limit, you must split your URLs across multiple XML sitemaps.

You can then list these sitemaps in a single sitemap index file. The index points search engines to all of your other sitemaps, so you can submit the index instead of each sitemap individually.
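If your hotel site has more URLs than one sitemap can hold, the split can be scripted. The Python sketch below is a minimal illustration, not a production generator: it assumes you already have a list of page URLs, uses a hypothetical domain and file naming scheme (`www.example-hotel.com`, `sitemap-1.xml`, and so on), and only enforces the 50,000-URL limit, so you would still need to check that each file stays under 50 MB.

```python
from xml.etree import ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS = 50_000  # per-file URL limit from the sitemap protocol


def chunk(urls, size=MAX_URLS):
    """Split the URL list into consecutive groups of at most `size` URLs."""
    return [urls[i:i + size] for i in range(0, len(urls), size)]


def build_urlset(urls):
    """Return the XML text of one <urlset> sitemap."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = url
    return ET.tostring(urlset, encoding="unicode")


def build_index(sitemap_urls):
    """Return the XML text of a <sitemapindex> that points at each sitemap."""
    index = ET.Element("sitemapindex", xmlns=SITEMAP_NS)
    for url in sitemap_urls:
        ET.SubElement(ET.SubElement(index, "sitemap"), "loc").text = url
    return ET.tostring(index, encoding="unicode")


def build_sitemaps(page_urls, base="https://www.example-hotel.com"):
    """Split pages into sitemap files and build the matching index.

    Returns (files, index_xml), where files maps a file name to its XML.
    `base` and the file naming scheme are hypothetical placeholders.
    """
    files = {}
    for n, group in enumerate(chunk(page_urls), start=1):
        files[f"sitemap-{n}.xml"] = build_urlset(group)
    index_xml = build_index(f"{base}/{name}" for name in files)
    return files, index_xml
```

Each generated file is uploaded to your server, and the index file's URL is the one you submit to search engines.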

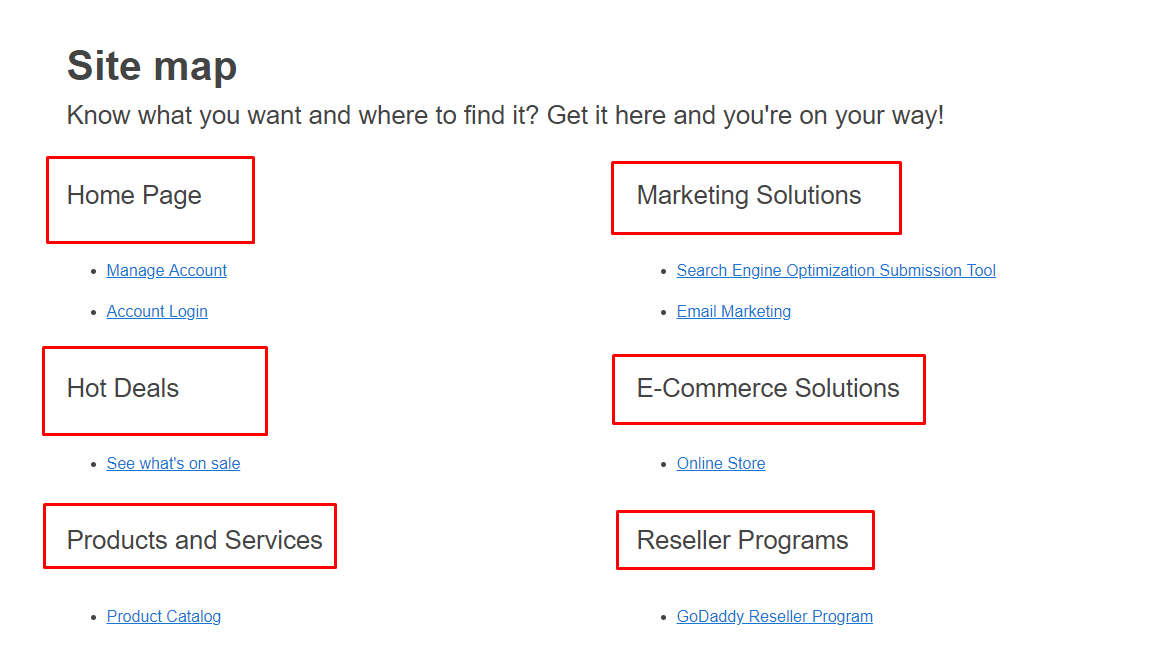

B. HTML Sitemap

Source: Serpstat

HTML sitemaps, the second main type, are meant to be read by website visitors and help them navigate to a specific page.

They are more beneficial for the user experience. Website footers typically contain links to HTML sitemaps.
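An HTML sitemap is usually just a page of grouped links. As a rough sketch, the Python below renders one from a list of page titles and URLs. The `html_sitemap` helper and its rule of grouping pages by the first segment of their URL path are assumptions made for illustration.

```python
from collections import defaultdict
from html import escape
from urllib.parse import urlparse


def html_sitemap(pages):
    """Render a simple HTML sitemap (hypothetical helper).

    `pages` is a list of (title, url) pairs; pages are grouped by the first
    segment of their URL path, which is an assumption for this example.
    """
    sections = defaultdict(list)
    for title, url in pages:
        path = urlparse(url).path.strip("/")
        section = path.split("/")[0] if path else "home"
        sections[section].append((title, url))

    parts = ['<nav aria-label="Sitemap">']
    for section in sorted(sections):
        parts.append(f"<h2>{escape(section.title())}</h2>")
        parts.append("<ul>")
        for title, url in sections[section]:
            parts.append(f'<li><a href="{escape(url)}">{escape(title)}</a></li>')
        parts.append("</ul>")
    parts.append("</nav>")
    return "\n".join(parts)
```

The resulting markup can be placed on its own page and linked from the site footer.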

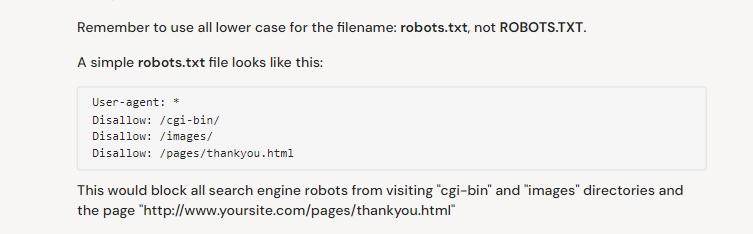

Source: SEOsitecheckup.com

The robots.txt file, like every other website file, is hosted on the web server.

You can usually view the robots.txt file by entering the homepage URL followed by /robots.txt, such as https://www.innsight.com/robots.txt.

Because the file isn't linked from anywhere else on the site, visitors are unlikely to come across it.

However, most web crawlers check it before crawling the rest of the site.

A robots.txt file can offer instructions for bots, but it cannot enforce those guidelines.

Before accessing any other page on a domain, a well-behaved bot, such as a web crawler or a news feed bot, will first read the robots.txt file and follow its instructions.
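Here is roughly what that first step looks like for a well-behaved bot, sketched with Python's standard `urllib.robotparser` module. The helper names and example rules are hypothetical. To keep the example offline, the robots.txt lines are passed in directly instead of being downloaded from the URL that `robots_url` builds.

```python
from urllib.parse import urlsplit, urlunsplit
from urllib.robotparser import RobotFileParser


def robots_url(page_url):
    """robots.txt lives at the root of the host serving the page (hypothetical helper)."""
    parts = urlsplit(page_url)
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


def polite_can_fetch(robots_lines, user_agent, url):
    """Check robots.txt rules before fetching a page (hypothetical helper).

    A real crawler would first download the file from robots_url(url);
    here its lines are passed in so the example runs offline.
    """
    parser = RobotFileParser()
    parser.parse(robots_lines)
    return parser.can_fetch(user_agent, url)
```

A crawler would call `polite_can_fetch` for every URL it plans to visit and skip the ones that return `False`.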

A bad bot will either ignore the robots.txt file or parse it to find the URLs it has been told not to visit.

A web crawler follows the most specific instructions in the robots.txt file: if the file contains conflicting rules, the crawler obeys the more specific one.
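To make the idea that the more specific rule wins concrete, here is a small Python sketch. It follows the precedence Google documents for its crawler (the longest matching path wins, and on a tie the less restrictive Allow rule wins). Other crawlers may behave differently, and Python's own `urllib.robotparser` simply applies the first matching rule in file order. The `is_allowed` helper is hypothetical and ignores the `*` and `$` wildcards.

```python
def is_allowed(rules, path):
    """Resolve Allow/Disallow conflicts by rule specificity (hypothetical helper).

    `rules` is a list of ("allow" | "disallow", path_prefix) pairs for one
    user agent. The longest matching prefix wins; on a tie, Allow wins.
    Wildcards (* and $) are not handled in this sketch.
    """
    best_len, allowed = -1, True  # no matching rule means the path is allowed
    for kind, prefix in rules:
        if not prefix or not path.startswith(prefix):
            continue  # an empty rule or a non-matching prefix is ignored
        is_allow = kind == "allow"
        if len(prefix) > best_len or (len(prefix) == best_len and is_allow):
            best_len, allowed = len(prefix), is_allow
    return allowed
```

For example, with `Disallow: /rooms/` and `Allow: /rooms/suites/`, a suite page stays crawlable because the Allow rule's path is longer.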

Page speed measures how quickly a page loads, using several metrics. Among the most important are Google's Core Web Vitals, which cover loading speed, responsiveness, and visual stability.

Core Web Vitals

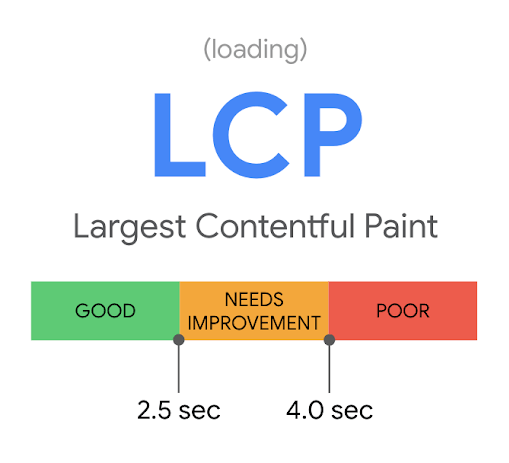

LCP (Largest Contentful Paint):

Largest Contentful Paint measures how quickly the main content of a page loads.

Instead of measuring how long it takes for the whole page to load, LCP measures how long it takes to render the largest content element visible in the viewport. That element is usually an image, a video's poster image, or a large block of text.

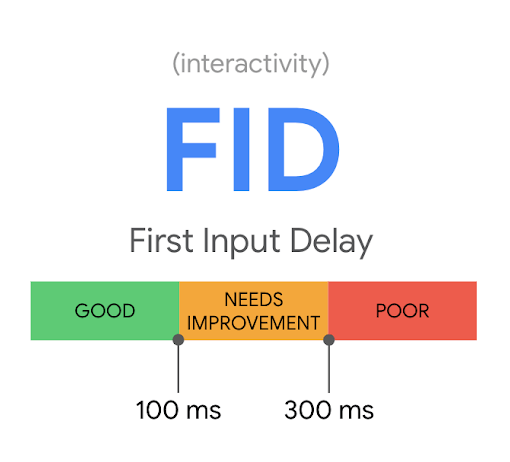

FID (First Input Delay):

First Input Delay measures the time from a user's first interaction with a page to the moment the browser can begin responding to it.

A shorter delay makes the page feel faster and more professional, which produces a better user experience.

As websites become more complex, browsers have more work to do, such as running JavaScript, and a busy browser takes longer to respond to input.

The interactions FID measures are discrete actions such as mouse clicks, taps on a touchscreen, and key presses.

A First Input Delay of 100 ms or less is rated "Good", 100 ms to 300 ms "Needs Improvement", and anything above 300 ms "Poor".

Source: Marketmuse.com

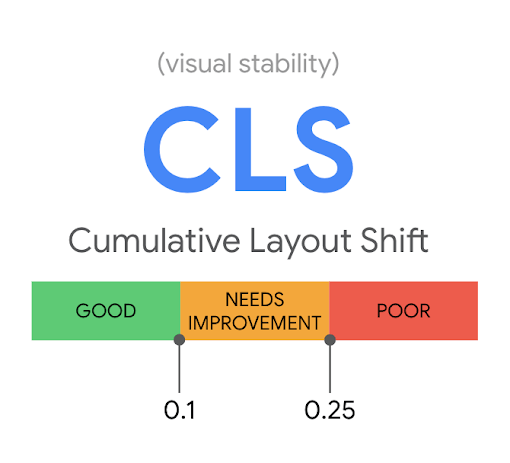

CLS (Cumulative Layout Shift):

Cumulative Layout Shift measures how much visible elements on a page move unexpectedly, for example while the page loads.

The browser compares successive frames to detect when elements shift and by how much, and combines these individual layout shift scores into a single value.
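For the curious, here is how CLS is calculated, as described in Google's web.dev documentation. Each unexpected shift scores its impact fraction (the share of the viewport affected) multiplied by its distance fraction (how far elements moved, relative to the viewport). Shifts less than 1 second apart are grouped into a session window capped at 5 seconds, and CLS is the total of the worst window; shifts right after user input are excluded. The Python below is a simplified sketch of that calculation with hypothetical names; in practice the browser measures these values for you.

```python
from dataclasses import dataclass


@dataclass
class LayoutShift:
    """One layout shift (hypothetical record for this sketch)."""
    time_ms: float                   # when the shift happened
    impact_fraction: float           # share of the viewport affected (0..1)
    distance_fraction: float         # distance moved relative to the viewport (0..1)
    had_recent_input: bool = False   # shifts right after user input are excluded

    @property
    def score(self):
        return self.impact_fraction * self.distance_fraction


def cumulative_layout_shift(shifts, gap_ms=1000, max_window_ms=5000):
    """Return CLS: the largest total score of any session window."""
    best = current = 0.0
    window_start = last_time = None
    for shift in sorted(shifts, key=lambda item: item.time_ms):
        if shift.had_recent_input:
            continue
        starts_new_window = (
            window_start is None
            or shift.time_ms - last_time >= gap_ms            # pause of 1 s or more
            or shift.time_ms - window_start >= max_window_ms  # window reached 5 s
        )
        if starts_new_window:
            window_start, current = shift.time_ms, 0.0
        current += shift.score
        last_time = shift.time_ms
        best = max(best, current)
    return best
```

Two shifts of 0.05 that happen 400 ms apart fall into the same window, so together they count as 0.1.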

A score of 0.1 or less is considered "Good", a score between 0.1 and 0.25 "Needs Improvement", and a score above 0.25 "Poor".
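The three metrics share the same rating scheme, so rating a measurement is a simple lookup. In this Python sketch the FID and CLS thresholds come from the text above; the LCP thresholds (2.5 s and 4 s) are Google's published values and are not stated earlier in this article. Note that in March 2024 Google replaced FID with Interaction to Next Paint (INP) as a Core Web Vital, so check the current metric list before relying on FID. The `rate` helper is hypothetical.

```python
# (upper bound for "Good", upper bound for "Needs Improvement") per metric,
# following Google's Core Web Vitals guidance.
THRESHOLDS = {
    "LCP": (2500, 4000),  # milliseconds
    "FID": (100, 300),    # milliseconds
    "CLS": (0.1, 0.25),   # unitless score
}


def rate(metric, value):
    """Map a Core Web Vitals measurement to its rating (hypothetical helper)."""
    good, poor = THRESHOLDS[metric]
    if value <= good:
        return "Good"
    if value <= poor:
        return "Needs Improvement"
    return "Poor"
```

For example, `rate("FID", 250)` gives "Needs Improvement".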

Google's PageSpeed Insights is a helpful resource for identifying CLS problems on a site. Unfortunately, many layout shifts are caused by ads, which are a significant source of income for website owners and often cannot simply be removed.

Source: https://web.dev/

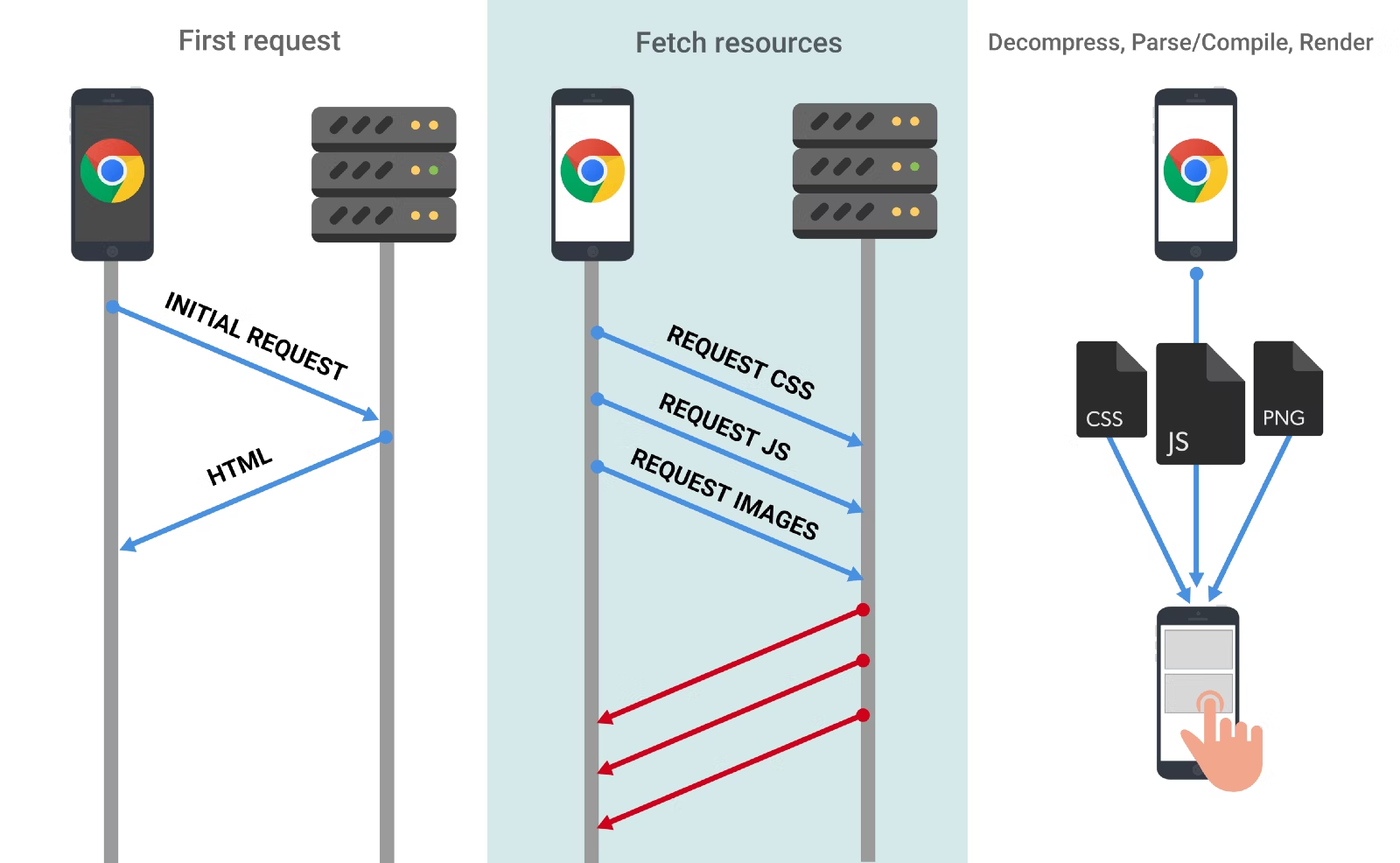

JavaScript needs more care than HTML and CSS when it comes to SEO.

If used improperly, JavaScript may prevent some of your content from being indexed. To see your website as users do, Google must render its JavaScript; if it does not, it will index your page without the JavaScript-powered parts. If that unindexed content is needed to satisfy user intent, your rankings may suffer.

Large JavaScript files can also slow down a page's loading time considerably, which may lead to higher bounce rates and lower rankings.

CSS can be added to a page in three ways:

Inline. Each HTML element that needs styling gets its own style attribute. Because this is verbose and hard to maintain, it is rarely used.

Internal. A style element in the page's head section defines the styles for that whole page. This technique is useful for landing pages with a distinctive design.

CSS is most frequently implemented via an external stylesheet, a separate file with the .css extension that is linked from the page's head section.

This approach is popular because one file can define the style of an entire website.

Browsers and Google must fetch these resources to render the page's content properly. Occasionally, browsers and Google, or only Google, fail to load the files, and the causes are the same for CSS and JavaScript.

In other, more common situations, Google and browsers can fetch the files, but they load too late, which degrades the user experience and can slow down crawling.

Running a website audit is a simple way to find CSS- and JavaScript-related problems on your site.
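As a rough starting point before a full audit, the Python sketch below uses the standard library's `html.parser` to count how a page adds its CSS (inline, internal, or external) and to flag external scripts in the head that lack `async` or `defer`, which typically block rendering. It only reads the HTML you give it and does not fetch files, so it cannot detect resources that fail to load. The `AssetAudit` class is hypothetical.

```python
from html.parser import HTMLParser


class AssetAudit(HTMLParser):
    """Count CSS methods and flag render-blocking head scripts (hypothetical class).

    A static check only: nothing is fetched or executed.
    """

    def __init__(self):
        super().__init__()
        self.in_head = False
        self.inline_styles = 0      # elements with a style="..." attribute
        self.internal_styles = 0    # <style> blocks
        self.external_sheets = []   # <link rel="stylesheet" href="...">
        self.blocking_scripts = []  # external head scripts without async/defer

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)  # boolean attributes such as async map to None
        if tag == "head":
            self.in_head = True
        if "style" in attrs:
            self.inline_styles += 1
        if tag == "style":
            self.internal_styles += 1
        elif tag == "link" and "stylesheet" in (attrs.get("rel") or "").lower().split():
            self.external_sheets.append(attrs.get("href"))
        elif tag == "script" and "src" in attrs and self.in_head:
            # Module scripts are deferred by default, so they are not flagged.
            if "async" not in attrs and "defer" not in attrs and attrs.get("type") != "module":
                self.blocking_scripts.append(attrs["src"])

    def handle_endtag(self, tag):
        if tag == "head":
            self.in_head = False
```

Feed it a page's HTML with `audit.feed(html)` and inspect the counters afterwards.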

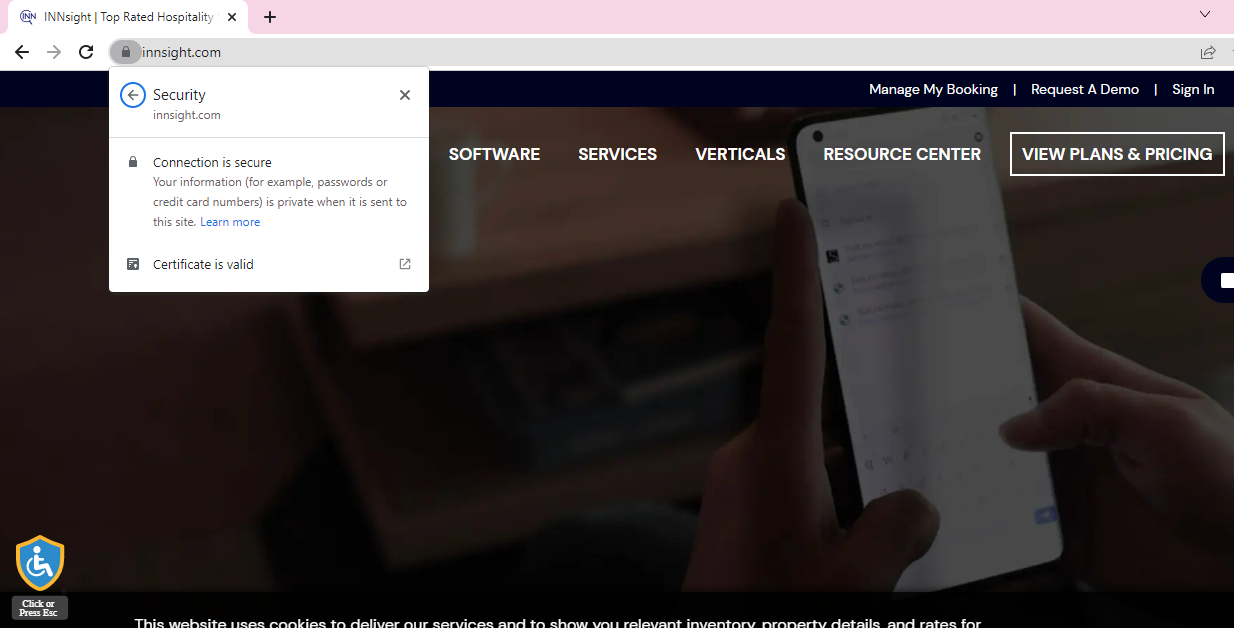

Getting an SSL certificate is quick and straightforward, unlike building backlinks or improving on-page content, which take time.

Google is pushing for a safer Internet and uses HTTPS as a ranking signal, so serving your site over HTTPS with an SSL certificate can give it a small SEO boost.

It is a simple way to gain an edge over competitors whose websites do not offer a secure browsing experience.
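Once the certificate is installed, it is worth checking that it is valid and not about to expire. The Python sketch below shows one way to do that with the standard `ssl` and `socket` modules. The helper names are hypothetical, the host name is a placeholder you supply, and a monitoring service would do the same job.

```python
import socket
import ssl
import time


def days_until_expiry(not_after, now=None):
    """Days left before a certificate's notAfter date; negative if expired (hypothetical helper)."""
    expires = ssl.cert_time_to_seconds(not_after)
    now = time.time() if now is None else now
    return (expires - now) / 86400


def fetch_not_after(hostname, port=443, timeout=5.0):
    """Open a TLS connection and return the certificate's notAfter field (hypothetical helper).

    create_default_context() verifies the certificate chain and host name,
    so an invalid or mismatched certificate raises ssl.SSLError here.
    """
    context = ssl.create_default_context()
    with socket.create_connection((hostname, port), timeout=timeout) as sock:
        with context.wrap_socket(sock, server_hostname=hostname) as tls:
            return tls.getpeercert()["notAfter"]
```

For example, `days_until_expiry(fetch_not_after("www.example-hotel.com"))` would report the days left for that (hypothetical) host.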

Source: WooRank

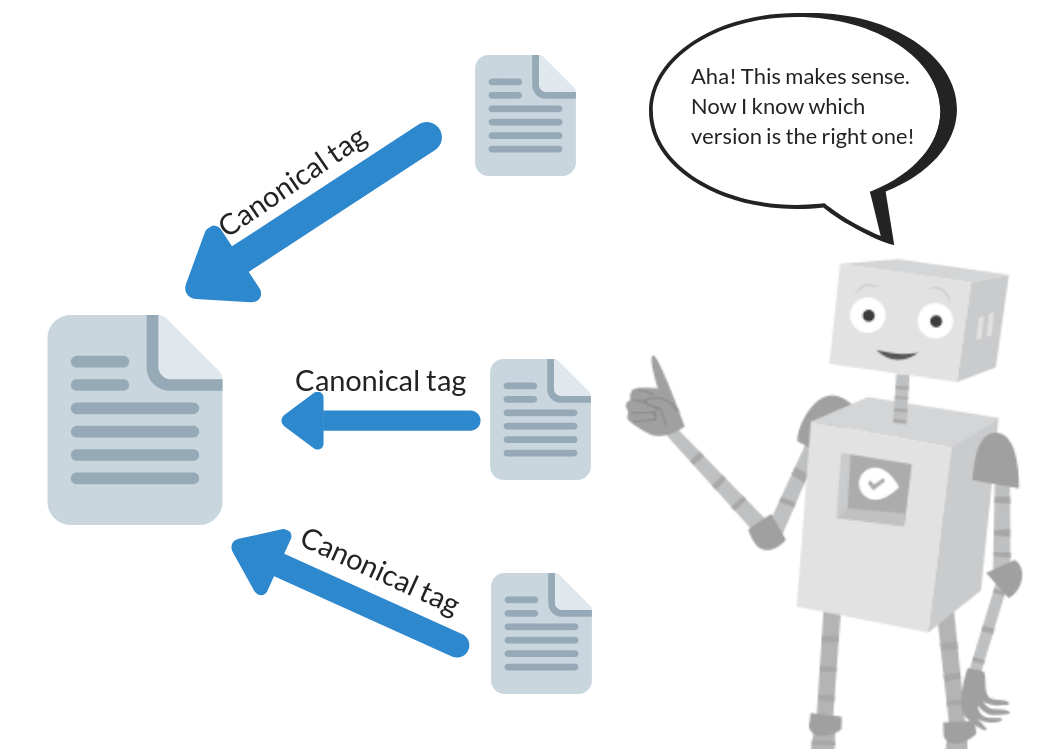

The main purpose of the canonical tag is to tell search engines which page is the main, original version and which pages are merely duplicates of it.

Websites often contain at least a few duplicate pages, that is, pages that display the same information under different URLs.

In these situations, Google will choose which page to index and rank, since it won't show all of them in its results when they are identical or highly similar.

For instance, a canonical tag is a `<link rel="canonical" href="...">` element placed in the head section of a duplicate page, with its href pointing to the preferred URL.
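Canonical tags work best when paired with a consistent rule for what the preferred URL is. The Python sketch below shows one possible rule, not a standard: it forces HTTPS, lowercases the host, drops the fragment and any trailing slash, and removes a hypothetical list of tracking parameters, then builds the canonical link tag. Adjust each choice to match how your own site's URLs are structured.

```python
from html import escape
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Query parameters that create duplicate URLs without changing the content.
# This list is an illustrative assumption; adjust it to your own site.
TRACKING_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "gclid", "fbclid"}


def canonical_url(url):
    """Collapse common duplicate-URL variants into one preferred form (hypothetical rule)."""
    parts = urlsplit(url)
    query = [(k, v) for k, v in parse_qsl(parts.query) if k not in TRACKING_PARAMS]
    path = parts.path.rstrip("/") or "/"  # site policy: no trailing slash
    return urlunsplit(("https", parts.netloc.lower(), path, urlencode(sorted(query)), ""))


def canonical_tag(url):
    """Build the <link rel="canonical"> element for a page's <head>."""
    return f'<link rel="canonical" href="{escape(canonical_url(url))}">'
```

With this rule, `http://WWW.Example-Hotel.com/rooms/?utm_source=newsletter` and `https://www.example-hotel.com/rooms#gallery` both point to `https://www.example-hotel.com/rooms`.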

To maintain your rankings and make it easier for search engines to grasp your changes, redirects must be handled carefully.

301 Redirect - Moved Permanently

A 301 redirect tells the browser or crawler that the resource has moved permanently and that the new URL should be used from now on.

When search engines detect a 301 redirect, they transfer the old page's ranking signals to the new one.

Think carefully before setting up a 301 redirect. If you change your mind and remove the redirect, your previous URL may no longer rank.

Reversing the redirect will not necessarily return the old page to its previous ranking position, so treat a 301 redirect as effectively irreversible.

302 Redirect - Found

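This redirect signifies that the page a user is looking for has temporarily been found at a different URL. In HTTP/1.1 the status is called "Found"; in HTTP/1.0 it was called "Moved Temporarily". The client should keep using the original URL for future requests.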

307 Redirect - Temporary Redirect

A 307 redirect, introduced in HTTP/1.1, indicates that the resource has been temporarily relocated and that the client must keep using the original URL for future requests. Unlike a 302, it also requires the client to repeat the request with the same method.

For SEO purposes, the client follows the redirect, but search engines should not replace the original URL in the SERPs with the new, temporary one.

Because a 307 redirect is temporary, search engines generally keep ranking the original URL instead of passing its ranking signals to the new one, as they do with a 301 redirect.
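To see which redirect a URL actually returns, you can request it without following the redirect. In the Python sketch below, `check_redirect` sends a single HEAD request with the standard `http.client` module and returns the status code and `Location` header, and `describe_redirect` summarizes the codes discussed above. Both helpers are hypothetical. The 308 (Permanent Redirect) entry is not covered in this article but is included for completeness. Some servers answer HEAD requests differently from GET, so treat the result as a first check.

```python
import http.client
from urllib.parse import urlsplit

REDIRECT_MEANING = {
    301: "Moved Permanently: search engines should index the new URL",
    302: "Found: temporary, search engines usually keep the original URL",
    307: "Temporary Redirect: temporary, and the request method must not change",
    308: "Permanent Redirect: like 301, but the request method must not change",
}


def describe_redirect(status):
    """Summarize a redirect status code (hypothetical helper)."""
    return REDIRECT_MEANING.get(status, "not a redirect covered here")


def check_redirect(url, timeout=5.0):
    """Send one HEAD request without following redirects (hypothetical helper).

    Returns (status code, Location header or None).
    """
    parts = urlsplit(url)
    conn_cls = http.client.HTTPSConnection if parts.scheme == "https" else http.client.HTTPConnection
    conn = conn_cls(parts.netloc, timeout=timeout)
    try:
        path = parts.path or "/"
        if parts.query:
            path += "?" + parts.query
        conn.request("HEAD", path)
        response = conn.getresponse()
        return response.status, response.getheader("Location")
    finally:
        conn.close()
```

For example, `describe_redirect(check_redirect(old_url)[0])` tells you whether an old page is sending a permanent or temporary redirect.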

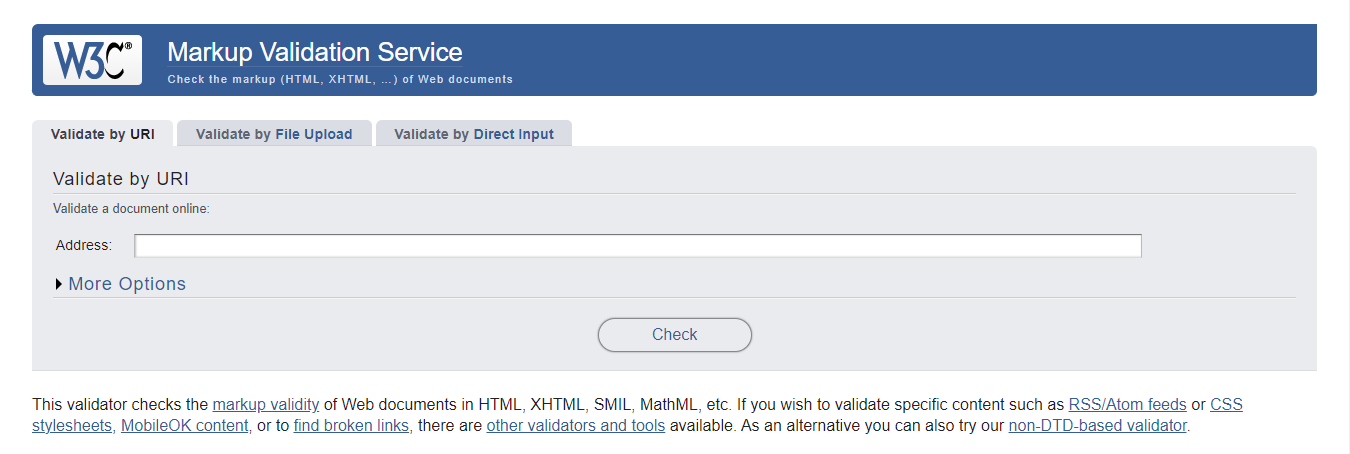

Source: https://validator.w3.org/

The World Wide Web Consortium (W3C) develops the web standards that are used worldwide.

It also provides a validator service that checks whether your HTML and other code are valid.
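The validator can also be called from a script. The Python sketch below posts a page's HTML to the W3C Nu Html Checker and counts errors and warnings in its JSON reply. The endpoint, request headers, and message fields (`type`, `subType`) reflect the checker's web service as we understand it and should be verified against its documentation. The helper names and User-Agent value are hypothetical placeholders, and you should respect the service's usage limits.

```python
import json
import urllib.request

# Nu Html Checker endpoint with JSON output (verify against the service docs).
CHECKER_URL = "https://validator.w3.org/nu/?out=json"


def validate_html(html, user_agent="hotel-site-audit/0.1"):
    """POST a page's HTML to the checker and return its JSON reply (hypothetical helper)."""
    request = urllib.request.Request(
        CHECKER_URL,
        data=html.encode("utf-8"),
        headers={"Content-Type": "text/html; charset=utf-8", "User-Agent": user_agent},
    )
    with urllib.request.urlopen(request, timeout=30) as response:
        return json.load(response)


def summarize(report):
    """Count errors and warnings in a checker report (hypothetical helper)."""
    errors = warnings = 0
    for msg in report.get("messages", []):
        if msg.get("type") == "error":
            errors += 1
        elif msg.get("type") == "info" and msg.get("subType") == "warning":
            warnings += 1
    return {"errors": errors, "warnings": warnings}
```

Running `summarize(validate_html(page_html))` on each template of your site gives a quick overview of where to start fixing.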

Making sure your website validates is one of the most important things you can do to ensure cross-browser and cross-platform compatibility and to create an accessible online experience for everyone.

Incorrect code can cause display issues and long processing or response times.

Put simply, if your code does not work properly across all major web browsers, it can hurt both the user experience and SEO.

Google does not grade how you write your code: a W3C validation error will not by itself lower your rankings.

Even so, Google still encourages code validation, because it:

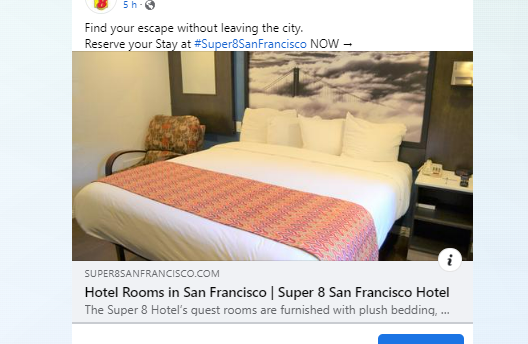

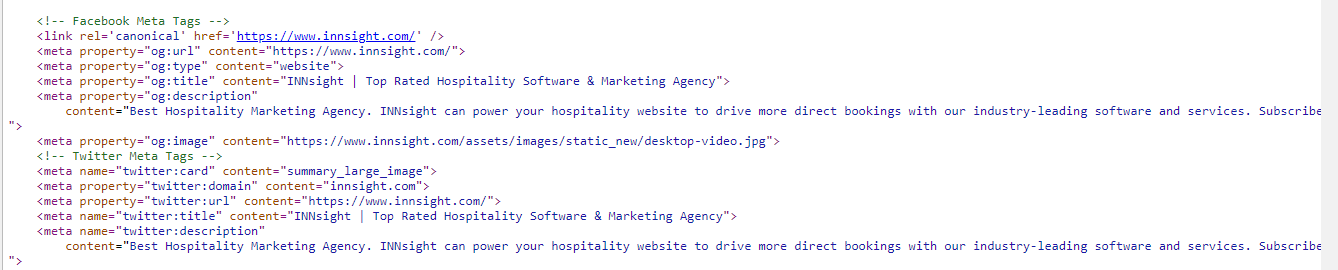

The Open Graph protocol specifies what content is shown when a link to a page is shared on social media. In its own words, it enables any web page to become a rich object in a social graph.

For example, the Open Graph standard lets you choose which image, title, and description appear when you share a link on social networks.

Without Open Graph, social media networks can arbitrarily select an image, title, and description.

Social networking sites such as Facebook, Twitter, and LinkedIn support Open Graph tags. Twitter also has its own meta tags, known as Twitter Cards, but it falls back to Open Graph tags when no Twitter Card tags are present.

This is how it looks with the meta properties you use.

And code-wise, this is how it appears:

Why does this matter for SEO, and how does it help? The points below explain the technical SEO behind it.

The Open Graph protocol improves the user experience around shared content. It expands the visibility of your content, makes it more appealing, and helps attract clicks.

For instance:

OG tags tell social networks which content to display when someone shares your page, which helps them understand your content.

For example, a link shared on Facebook looks unprofessional if the image is missing or the headline is inaccurate.
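Open Graph tags are ordinary meta elements in the page's head. As a minimal sketch, the Python below renders them from keyword arguments and refuses to run if one of the four basic properties listed by the Open Graph protocol (`og:title`, `og:type`, `og:image`, `og:url`) is missing. The helper name and the example values are hypothetical.

```python
from html import escape

# The four basic properties the Open Graph protocol asks every page to have.
REQUIRED_OG = ("title", "type", "image", "url")


def og_meta_tags(**properties):
    """Render Open Graph <meta> tags for a page's <head> (hypothetical helper).

    Keyword names become og:<name> properties; raises if a basic one is missing.
    """
    missing = [name for name in REQUIRED_OG if not properties.get(name)]
    if missing:
        raise ValueError(f"missing Open Graph properties: {', '.join(missing)}")
    return "\n".join(
        f'<meta property="og:{name}" content="{escape(str(value))}">'
        for name, value in properties.items()
    )
```

For example, `og_meta_tags(title="Seaside Suites & Spa", type="website", image="https://www.example-hotel.com/img/pool.jpg", url="https://www.example-hotel.com/")` produces four escaped meta tags ready to paste into the head section.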

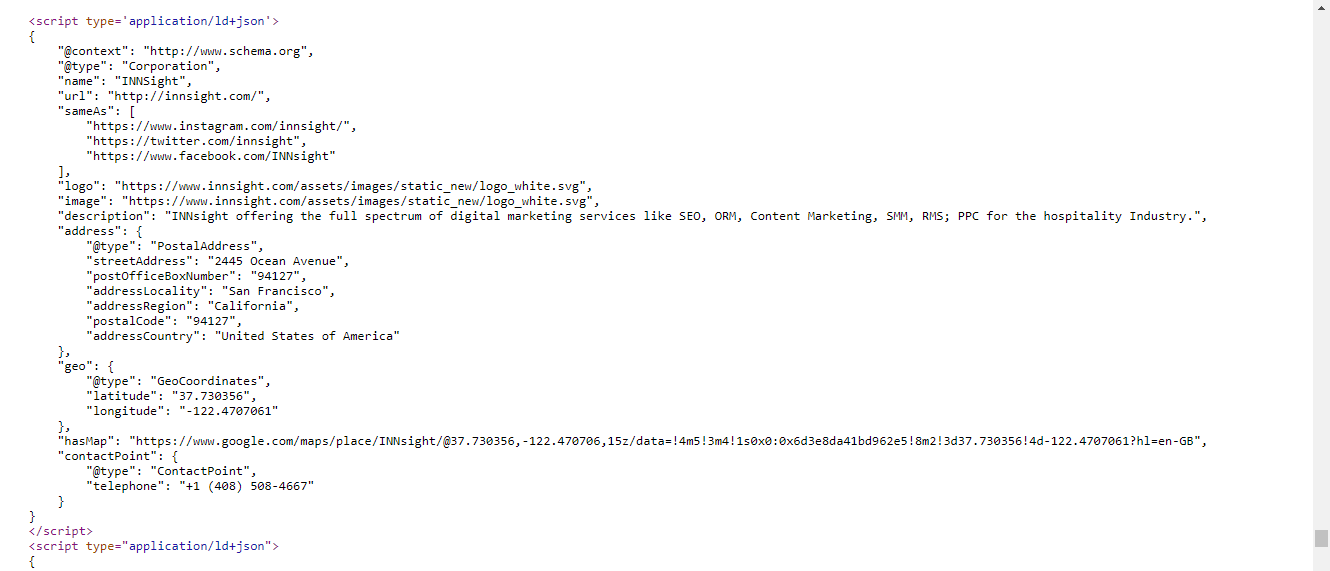

Schema markup, also known as structured data, is code that uses a shared semantic vocabulary (Schema.org) understood by search engines.

This code gives search engines more detailed data that helps them interpret your content.

As a result, the rich snippets displayed below the page title offer users richer, more factual information.

Search results with schema implemented inform the user quickly.

The user can see the details of your site at a glance and decide whether to click through or move on to a more suitable result.

This extra information can also help your website get found and earn more clicks for many types of content.

Many schema types are available, depending on your industry and the kind of content you want to display.

Once you've dug into the code, you'll want to locate the section of your website that describes what your business offers.

The disadvantage of using microdata is that you must mark up every single item inside the body of your webpage. As you can guess, this can quickly get out of hand.

Before you add schema to your web pages, you must first determine the 'item type' of the information on each page.

The example below shows both sides: the code behind the markup and the result it produces in search.

This is how schema looks in the code

and this is how schema looks on Google search results.
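As an alternative to marking up every item with microdata, schema can be added as a single JSON-LD block, which is the format Google recommends. The Python sketch below builds a JSON-LD description of a schema.org `Hotel`. The function and all example values are hypothetical; choose the fields that actually apply to your hotel from the schema.org `Hotel` type.

```python
import json


def hotel_json_ld(name, url, street, city, country, telephone, price_range=None):
    """Build a schema.org Hotel description as a JSON-LD <script> block (hypothetical helper)."""
    data = {
        "@context": "https://schema.org",
        "@type": "Hotel",
        "name": name,
        "url": url,
        "telephone": telephone,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": city,
            "addressCountry": country,
        },
    }
    if price_range:
        data["priceRange"] = price_range
    # Escape "</" so text inside the JSON cannot close the <script> element early.
    body = json.dumps(data, indent=2, ensure_ascii=False).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'
```

The returned block goes into the page's HTML; Google's Rich Results Test can then confirm whether it is eligible for rich results.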

Let’s look at the most frequently used questions related to Technical SEO.

A. The checklist above covers the key technical factors and will help you determine whether your website meets them.

A. Technical SEO is necessary because it ensures that your website is free of technical problems that prevent search engines from understanding and ranking it. Use technical SEO to gain online visibility and turn it into customers.

A.

There are many more factors to consider. For more SEO tips, read our blog post "How Hotels and Restaurants Can Improve Their Ranking in Search Engines with Simple On-Page Optimization?"

A. Include your primary keyword early in your content; write original titles, descriptions, and content; improve your site's load time; monitor your performance with Google Search Console; optimize images for SEO; use internal linking; build backlinks to your website; and improve your site's user experience.

A.

A. Various factors can cause your Google rankings to decline. Drops are most often triggered by changes made to the website, but they can also be caused by a Google algorithm update, technical problems, improvements made by competitors, new SERP features, or a Google penalty.

With the support of the INNsight SEO team, you can boost direct traffic and reservations on your website.

A. SEO usually needs only a little code, and you can do an excellent job of SEO without writing any. Still, understanding how code works, and being able to do some coding yourself, is always a helpful skill.

A. Multilingual SEO optimizes your website's content in multiple languages so that users in other countries and new markets can find it through organic search.

If you want our team to help you achieve your marketing goals and drive more direct revenue, contact us today!
